Cloud Jewels: Estimating kWh in the Cloud

Etsy has increasingly been enjoying the perks of public cloud infrastructure for a few years, but one crucial capability has been missing: we have been unable to measure our progress against one of our key impact goals for 2025, reducing our energy intensity by 25%. Cloud providers generally do not disclose to customers how much energy their services consume. To make up for this lack of data, we created a set of conversion factors called Cloud Jewels that help us roughly convert our cloud usage information (such as Google Cloud usage data) into approximate energy use. We are publishing this research to begin a conversation and a collaboration that we hope you'll join, especially if you share our concerns about climate change.

This isn’t meant as a replacement for energy use data or guidance from Google, Amazon, or another provider, and we cannot guarantee the accuracy of the rough estimates the tool provides. Instead, it is meant to give us a sense of our energy usage and how it changes over time, based on aggregated data about how we use the cloud and on publicly available information.

A little background

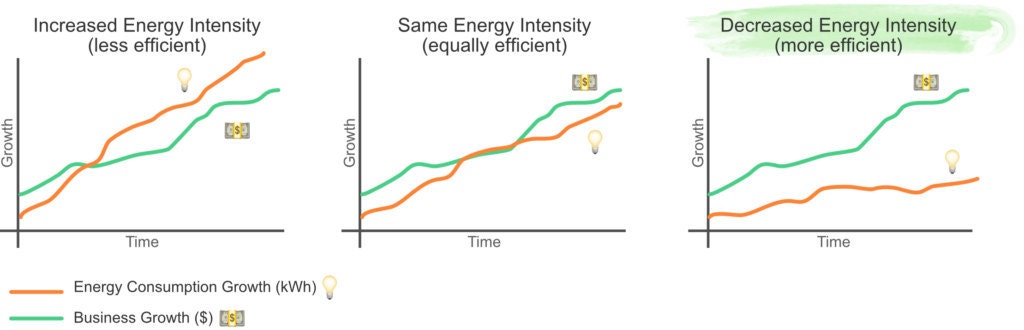

In the face of a changing climate, we at Etsy are committed to reducing our ecological footprint. In 2017, we set a goal of reducing the intensity of our energy use by 25% by 2025, meaning we should use less energy in proportion to the size of our business. To evaluate our progress against this goal, we have historically measured our energy usage across our footprint, including the energy consumption of servers in our data centers.
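Because the goal is about intensity rather than absolute energy, total energy use can rise while intensity still falls. The minimal sketch below illustrates that arithmetic. The post does not say which measure of business size Etsy divides by, so `business_size` is a hypothetical placeholder, and the numbers in the example are made up.

```python
def energy_intensity(energy_kwh: float, business_size: float) -> float:
    """Energy used per unit of business size (the size metric is a hypothetical placeholder)."""
    if business_size <= 0:
        raise ValueError("business_size must be positive")
    return energy_kwh / business_size


def intensity_reduction(baseline: float, current: float) -> float:
    """Fractional drop in intensity relative to a baseline year (0.25 means 25% lower)."""
    return 1.0 - current / baseline


def meets_goal(baseline: float, current: float, target: float = 0.25) -> bool:
    """True if intensity has fallen by at least `target` relative to the baseline."""
    return intensity_reduction(baseline, current) >= target


if __name__ == "__main__":
    # Made-up numbers: energy use grows 20% while the business doubles.
    base = energy_intensity(1_000_000, 100.0)  # 10,000 kWh per unit of size
    now = energy_intensity(1_200_000, 200.0)   # 6,000 kWh per unit of size
    print(f"reduction: {intensity_reduction(base, now):.0%}, goal met: {meets_goal(base, now)}")
```

In this made-up case absolute energy use goes up, yet intensity falls by 40%, which would clear a 25% reduction target.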

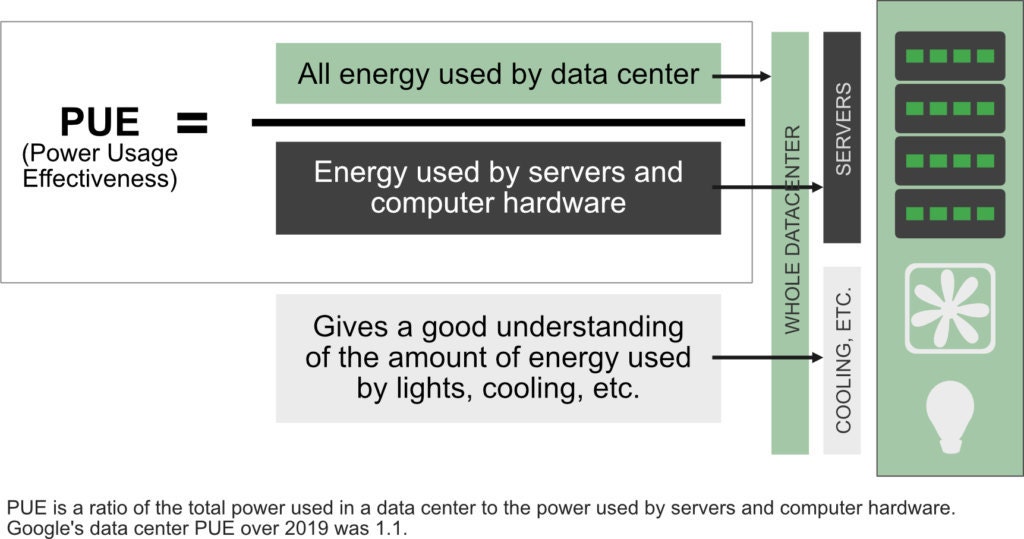

In early 2020, we finished our two-year migration from our own physical servers in a colocated data center to Google Cloud. In addition to a massive increase in the power and flexibility of our computing capabilities, the move was a win for our sustainability efforts because of the efficiency of Google’s data centers. Our old data centers had an average PUE (Power Usage Effectiveness) of 1.39 (FY18 average across our colocated data centers), whereas Google's data centers have a combined average PUE of 1.10. PUE is the ratio of the total energy a data center uses to the energy that goes to powering its computing equipment, so a PUE of 1.0 would mean zero overhead. It captures how efficient supporting factors like the building itself and its air conditioning are.
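To make the PUE comparison concrete, here is a minimal sketch of what the two PUE values imply for the same server load. It assumes the load delivered to the computing equipment stays exactly the same before and after the move, which is an idealization; the 1,000 kWh load in the example is hypothetical.

```python
OLD_PUE = 1.39     # FY18 average across Etsy's former colocated data centers (from the post)
GOOGLE_PUE = 1.10  # Google's combined average PUE (from the post)


def facility_energy(it_energy_kwh: float, pue: float) -> float:
    """Total facility energy needed to deliver it_energy_kwh to the computing equipment.

    PUE = total facility energy / IT equipment energy, so total = IT energy * PUE.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_kwh * pue


def overhead_savings(old_pue: float, new_pue: float) -> float:
    """Fraction of total energy saved when an unchanged IT load moves to a lower-PUE facility."""
    return 1.0 - new_pue / old_pue


if __name__ == "__main__":
    it_load_kwh = 1000.0  # hypothetical IT load, for illustration only
    print(facility_energy(it_load_kwh, OLD_PUE))     # about 1390 kWh
    print(facility_energy(it_load_kwh, GOOGLE_PUE))  # about 1100 kWh
    print(f"{overhead_savings(OLD_PUE, GOOGLE_PUE):.1%}")  # 20.9%
```

Under this idealized assumption, facility overhead alone accounts for roughly a 21% reduction in total energy, before any change in how much power the servers themselves draw.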

While a lower PUE helps our energy footprint significantly, we need to be able to measure and optimize the amount of power that our servers draw. Knowing how much energy each of our workloads uses helps us make design and code decisions that optimize for sustainability. The Google Cloud team has been a terrific partner to us throughout our migration, but they are unable to provide us with data about our cloud energy consumption. This is a challenge across the industry: neither Amazon Web Services nor Microsoft Azure provides this information to customers. We have heard concerns that range from difficulties attributing energy use to individual customers to sensitivities around proprietary information that could reveal too much about cloud providers' operations and financial position.

We thought about how we might be able to estimate our energy consumption in Google Cloud using the data we do have: Google provides us with usage data that shows us how many virtual CPU (Central Processing Unit) seconds we used, how much memory we requested for our servers, how many terabytes of data we have stored for how long, and how much networking traffic we were responsible for.

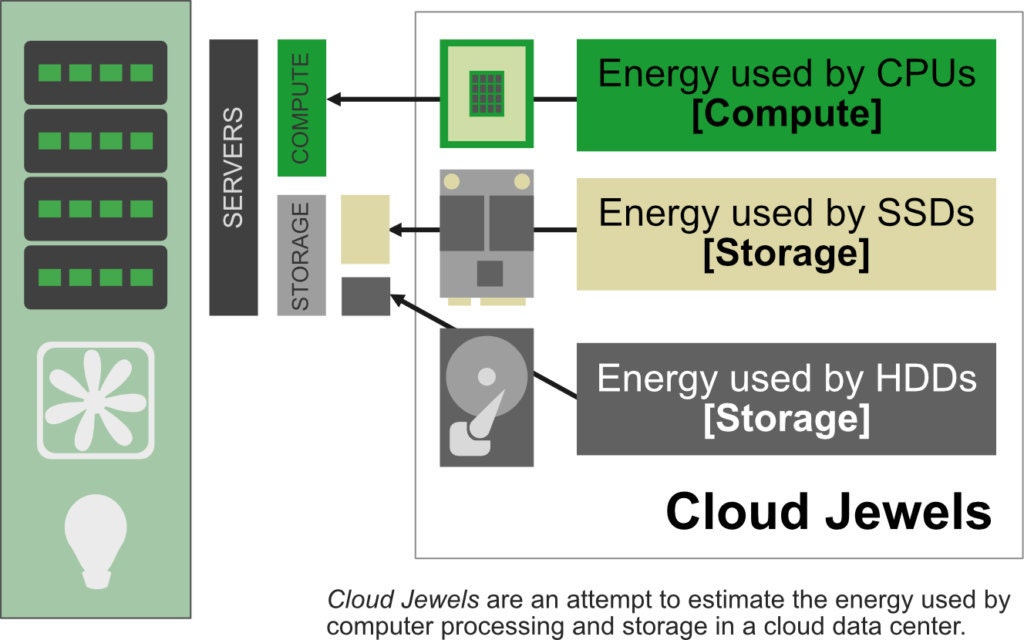

Our supposition was that if we could come up with general estimates for how many watt-hours (Wh) compute, storage and networking draw in a cloud environment, particularly based on public information, then we could apply those coefficients to our usage data to get at least a rough estimate of our cloud computing energy impact.

We are calling this set of estimated conversion factors Cloud Jewels. Other cloud computing customers can look at them and see how they might apply to their own usage data, across providers. Our goal is for cloud users across the industry to help refine our estimates, and ultimately to encourage cloud providers to give their customers more accurate cloud energy consumption data.

Methodology

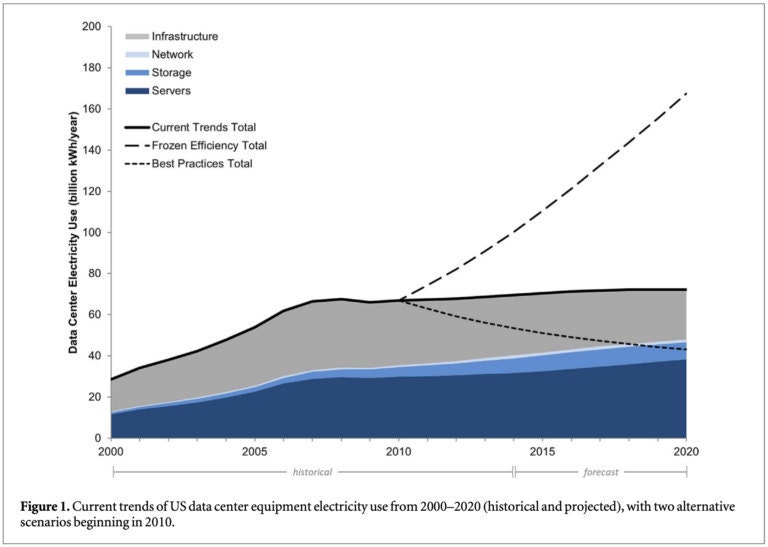

The sources that most influenced our methodology were the U.S. Data Center Energy Usage Report, The Data Center as a Computer, and the SPEC power report. We also spoke with industry experts Arman Shehabi, Jon Koomey, and Jon Taylor, who suggested additional resources and reviewed our methodology.

As a rough model, we assumed that the power we use can be attributed to:

- running a virtual server (compute),

- memory (RAM),

- storage, and

- networking.

Using the resources we found online, we were able to determine what we think are reasonable, conservative estimates for the amount of energy that compute and storage tasks consume. We are aiming for a conservative overestimate of energy consumed to make sure we are holding ourselves fully accountable for our computing footprint. We have yet to determine a reasonable way to estimate the impact of RAM or network usage, but we welcome contributions to this work! We are open-sourcing a script for others to apply these coefficients to their usage data, and the full methodology is detailed in our repository on GitHub.

Cloud Jewels coefficients

The following coefficients are our estimates of how many watt-hours (Wh) it takes to run one virtual CPU for one hour (vCPUh), and to store one terabyte of data for one hour (TBh) on HDD (hard disk drive) or SSD (solid-state drive) disks, in a cloud computing environment:

- 2.10 Wh/vCPUh for compute [Server]

- 0.89 Wh/TBh for HDD storage [Storage]

- 1.52 Wh/TBh for SSD storage [Storage]
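Applying the coefficients is a weighted sum over usage that has already been converted to vCPU-hours and terabyte-hours. The sketch below is illustrative only; the `Usage` type and `estimate_kwh` helper are hypothetical names, and the monthly usage figures are made up.

```python
from dataclasses import dataclass

# Cloud Jewels point estimates (no confidence intervals).
WH_PER_VCPU_HOUR = 2.10
WH_PER_HDD_TB_HOUR = 0.89
WH_PER_SSD_TB_HOUR = 1.52


@dataclass
class Usage:
    """Usage already converted to vCPU-hours and terabyte-hours (hypothetical type)."""
    vcpu_hours: float = 0.0
    hdd_tb_hours: float = 0.0
    ssd_tb_hours: float = 0.0


def estimate_kwh(usage: Usage) -> float:
    """Rough energy estimate in kWh; RAM and networking are deliberately not included."""
    wh = (
        usage.vcpu_hours * WH_PER_VCPU_HOUR
        + usage.hdd_tb_hours * WH_PER_HDD_TB_HOUR
        + usage.ssd_tb_hours * WH_PER_SSD_TB_HOUR
    )
    return wh / 1000.0  # Wh -> kWh


if __name__ == "__main__":
    hours = 30 * 24  # a hypothetical 30-day month
    month = Usage(
        vcpu_hours=1000 * hours,   # 1,000 vCPUs running all month
        hdd_tb_hours=100 * hours,  # 100 TB stored on HDD
        ssd_tb_hours=10 * hours,   # 10 TB stored on SSD
    )
    print(f"{estimate_kwh(month):,.1f} kWh")
```

For these made-up inputs the estimate comes to roughly 1,587 kWh, of which compute accounts for 1,512 kWh.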

On confidence

As you may notice, we are using point estimates without confidence intervals. This is partly intentional and reflects the experimental nature of this work. Our sources also provide single, rough estimates without confidence intervals, so we decided against numerically estimating our confidence, to avoid false precision. Our work has been reviewed by several industry experts, and our energy and carbon metrics for cloud computing have been assured by PricewaterhouseCoopers LLP. That said, we acknowledge that this methodology is only a first step toward visibility into the ecological impacts of our cloud computing usage, and it may evolve as our understanding improves. Whenever there has been a choice, we have erred on the side of conservative estimates, taking responsibility for more energy consumption than we are likely using so that we do not overestimate our savings. Although our data is limited, we are using these estimates as a jumping-off point to push ourselves and the industry forward. We especially welcome contributions and opinions. Let the conversation begin!

Server wattage estimate

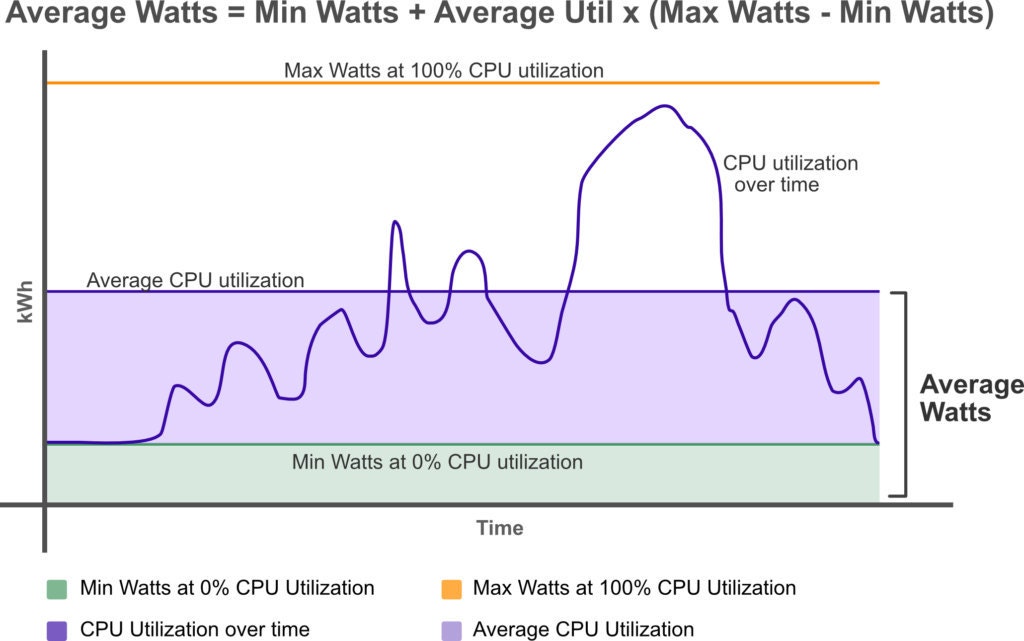

At a high level, to estimate server wattage, we used a general formula that models a server's average power draw as a function of its CPU utilization:

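$$W = \text{Min} + \text{Util} \times (\text{Max} - \text{Min})$$

where W is the server's estimated wattage, Min and Max are its minimum and maximum wattage, and Util is its average CPU utilization, expressed as a fraction.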

To determine minimum and maximum CPU wattage, we averaged the values reported by server manufacturers in the SPEC power database (filtered to servers that we deemed likely to be similar to Google's servers), and we used an industry average server utilization estimate (45%) from the U.S. Data Center Energy Usage Report.
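The formula is a linear interpolation between idle and full-load power. The sketch below applies it to averaged minimum and maximum wattages. The `(min, max)` records are hypothetical placeholders, not real SPEC power results; only the 45% utilization figure comes from the text. The post does not spell out how the server-level figure is normalized to the per-vCPU coefficient, so this sketch stops at watts per server.

```python
from statistics import mean

AVERAGE_UTILIZATION = 0.45  # industry average from the U.S. Data Center Energy Usage Report


def server_watts(min_watts: float, max_watts: float, utilization: float) -> float:
    """W = Min + Util * (Max - Min): linear interpolation between idle and full-load power."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be a fraction between 0 and 1")
    if max_watts < min_watts:
        raise ValueError("max_watts must be >= min_watts")
    return min_watts + utilization * (max_watts - min_watts)


# Hypothetical (min, max) wattage pairs standing in for filtered SPEC power records.
# These are NOT real SPEC results; substitute the records you select.
records = [(50.0, 300.0), (60.0, 340.0), (70.0, 380.0)]

avg_min = mean(r[0] for r in records)
avg_max = mean(r[1] for r in records)
watts = server_watts(avg_min, avg_max, AVERAGE_UTILIZATION)

if __name__ == "__main__":
    print(f"{watts:.1f} W per server at {AVERAGE_UTILIZATION:.0%} utilization")
    # Energy is power multiplied by time: W * hours = Wh.
    print(f"{watts * 24:.0f} Wh per server per day")
```

A linear model is the simplest choice here; real servers do not scale power perfectly linearly with utilization, which is one more reason to treat the result as a rough estimate.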

Storage wattage estimate

To estimate storage wattage, we used industry-wide estimates from the U.S. Data Center Energy Usage Report. That report contains estimates of average disk capacity as well as average disk wattage; dividing the wattage by the capacity gives an estimated wattage per terabyte.
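The storage estimate reduces to one division. Because 1 W sustained for 1 hour is 1 Wh, watts per terabyte is numerically the same as watt-hours per terabyte-hour, the unit of the storage coefficients. The inputs in this sketch are hypothetical, not the report's figures.

```python
def watts_per_tb(avg_disk_watts: float, avg_disk_capacity_tb: float) -> float:
    """Average power per terabyte stored; W/TB is numerically equal to Wh/TBh."""
    if avg_disk_capacity_tb <= 0:
        raise ValueError("capacity must be positive")
    return avg_disk_watts / avg_disk_capacity_tb


if __name__ == "__main__":
    # Hypothetical disk: 8 W average draw, 10 TB average capacity.
    coefficient = watts_per_tb(avg_disk_watts=8.0, avg_disk_capacity_tb=10.0)
    print(f"{coefficient:.2f} Wh/TBh")
    # Storing 50 TB for a 720-hour month with this hypothetical coefficient:
    print(f"{coefficient * 50 * 720:,.0f} Wh")
```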

Networking non-estimate

The resources we found on networking energy estimates covered general internet data transfer, as opposed to intra-data-center traffic between connected servers. Networking also made up a significantly smaller portion of our overall usage cost, so we are assuming it requires less energy than compute and storage. Finally, as far as we could tell from the research we found, the energy attributable to networking is generally far smaller than that attributable to compute and storage.

Application to usage data

We aggregated our usage data by SKU, categorized each SKU by the type of service it represents ("compute", "storage", or "n/a"), converted the units to vCPU-hours and terabyte-hours, and then applied our coefficients. Since we do not yet have a coefficient for networking or RAM that we feel confident in, we are leaving that data out for now. The experts we consulted are confident that our coefficients are conservative enough to account for our overall energy consumption without separate consideration of networking and RAM.
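The pipeline described above (aggregate by SKU, categorize, convert units, apply coefficients) can be sketched as follows. This is a minimal illustration, not Etsy's open-sourced script. It assumes each usage row carries a SKU name, an amount, and a unit of either `seconds` (vCPU time) or `byte-seconds` (storage), and it assumes decimal terabytes. The SKU names and the `SKU_CATEGORIES` mapping are hypothetical.

```python
from collections import defaultdict

# Cloud Jewels coefficients: Wh per vCPU-hour or per TB-hour.
COEFFICIENTS_WH = {"vcpu": 2.10, "hdd": 0.89, "ssd": 1.52}

# Hypothetical SKU names mapped to (category, coefficient key).
# Unmapped SKUs (and RAM/networking) fall into "n/a" and are skipped.
SKU_CATEGORIES = {
    "example-vcpu-core": ("compute", "vcpu"),
    "example-hdd-capacity": ("storage", "hdd"),
    "example-ssd-capacity": ("storage", "ssd"),
    "example-network-egress": ("n/a", None),
}

SECONDS_PER_HOUR = 3600
BYTES_PER_TB = 10**12  # assumption: decimal terabytes


def to_hours_or_tb_hours(category: str, amount: float, unit: str) -> float:
    """Convert vCPU-seconds to vCPU-hours and byte-seconds to TB-hours (assumed input units)."""
    if category == "compute" and unit == "seconds":
        return amount / SECONDS_PER_HOUR
    if category == "storage" and unit == "byte-seconds":
        return amount / (BYTES_PER_TB * SECONDS_PER_HOUR)
    raise ValueError(f"unexpected unit {unit!r} for category {category!r}")


def estimate_kwh_by_category(rows):
    """rows: iterable of (sku, usage_amount, usage_unit) tuples."""
    # 1. Aggregate usage per SKU (keeping the unit alongside it).
    per_sku = defaultdict(float)
    for sku, amount, unit in rows:
        per_sku[(sku, unit)] += amount

    # 2. Categorize each SKU, convert units, and apply the coefficients.
    kwh = defaultdict(float)
    for (sku, unit), amount in per_sku.items():
        category, key = SKU_CATEGORIES.get(sku, ("n/a", None))
        if category == "n/a":
            continue  # left out for now, like networking and RAM in the post
        quantity = to_hours_or_tb_hours(category, amount, unit)
        kwh[category] += quantity * COEFFICIENTS_WH[key] / 1000.0
    return dict(kwh)


if __name__ == "__main__":
    usage = [
        ("example-vcpu-core", 1_800_000, "seconds"),
        ("example-vcpu-core", 1_800_000, "seconds"),       # 1,000 vCPU-hours in total
        ("example-hdd-capacity", 3.6e18, "byte-seconds"),  # 1,000 TB-hours
        ("example-network-egress", 5e11, "bytes"),         # skipped as "n/a"
    ]
    print(estimate_kwh_by_category(usage))
```

Skipping "n/a" rows before unit conversion means unexpected units on uncategorized SKUs never raise an error, while a mislabeled compute or storage row still fails loudly.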

Results

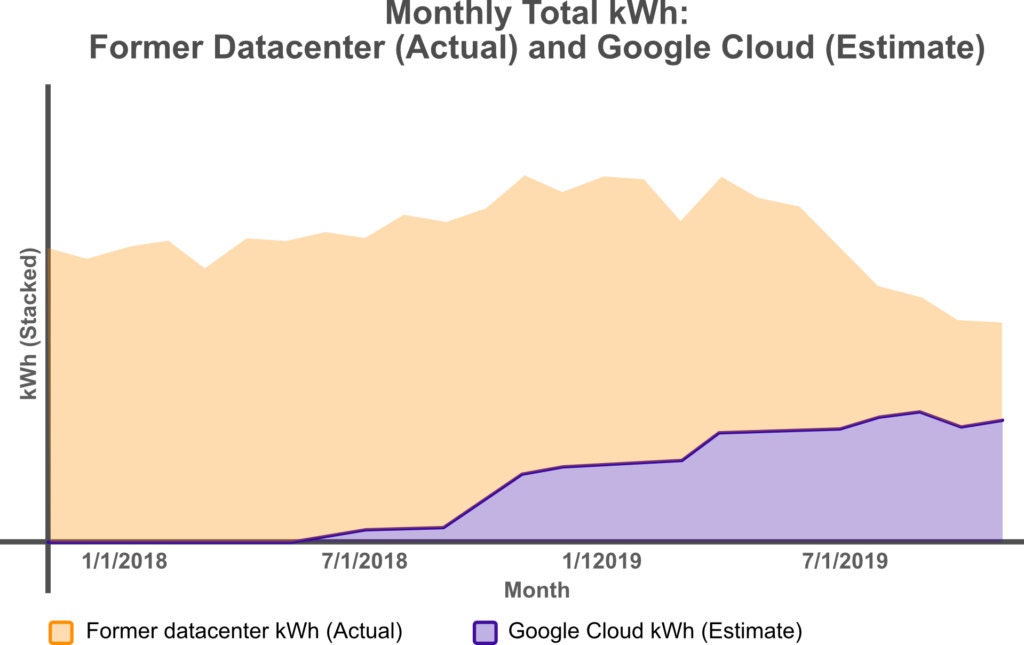

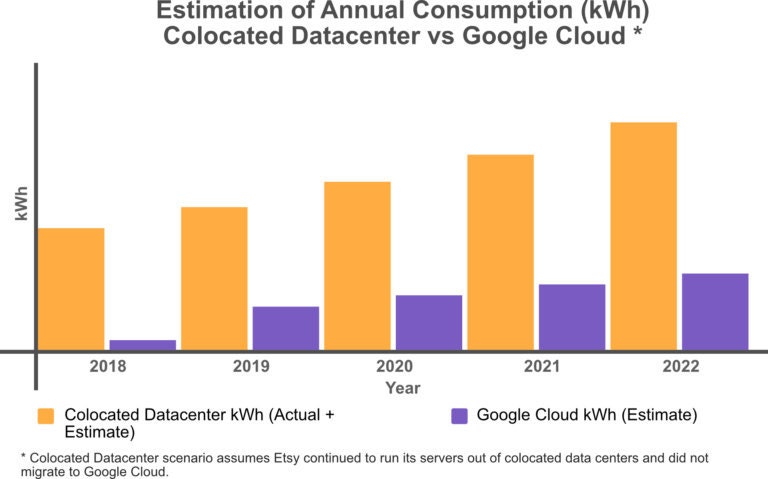

Applying our Cloud Jewels coefficients to our aggregated usage data, and comparing the estimates with the actual kWh totals from our former data centers over the past two years, indicates that our energy footprint in Google Cloud is smaller than it was on premises. It’s important to note that we are not taking into account networking or RAM, nor Google-maintained services like BigQuery, BigTable, StackDriver, or App Engine. Even so, assuming our estimates are even moderately close to accurate and verified to be conservative, the relative trend over time shows that we are on track to use less energy overall to do more computing than we did two years ago, even as our business has grown. This means we are making progress toward our energy intensity reduction goal.

We used historical data to estimate our energy savings since moving to Google Cloud.

Our estimated savings over the five-year period are roughly equivalent to the energy used by:

- ~20,000 sewing machines (running 24/7)

- ~147,000 light bulbs (running 24/7)

- ~1,200 dishwashers (running 24/7)
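Equivalences like these come from dividing the energy saved by what one device would use running around the clock for the same period. The sketch below assumes savings are the simple difference between actual on-premises kWh and the cloud estimate for a comparable period; the post does not spell out its exact method. All inputs, including the 10 W bulb, are hypothetical, since the post does not publish its totals or device wattages.

```python
HOURS_PER_YEAR = 24 * 365  # ignores leap days


def estimated_savings_kwh(on_prem_actual_kwh: float, cloud_estimate_kwh: float) -> float:
    """Assumed method: actual on-premises energy minus the cloud estimate for the same period."""
    return on_prem_actual_kwh - cloud_estimate_kwh


def device_equivalents(energy_kwh: float, device_watts: float, years: float) -> float:
    """How many devices drawing device_watts, running 24/7 for `years`, would use energy_kwh."""
    device_kwh = device_watts / 1000.0 * HOURS_PER_YEAR * years
    return energy_kwh / device_kwh


if __name__ == "__main__":
    # Made-up inputs for illustration only.
    saved = estimated_savings_kwh(on_prem_actual_kwh=900_000, cloud_estimate_kwh=462_000)
    print(round(device_equivalents(saved, device_watts=10, years=5)))  # hypothetical 10 W bulbs
```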

Next steps

We would next like to find ways to estimate the energy cost of network traffic and memory. There are also minor refinements we could make to our current estimates, though we want to ensure that further detail does not lead to false precision, that we do not overcomplicate the methodology, and that the work we publish is as generally applicable and useful to other companies as possible.

Part of our reasoning for open-sourcing this work is selfish: we want input! We welcome contributions to our estimates and additional resources that we should be using to refine them. We hope that publishing these coefficients will help other companies that use cloud computing providers estimate their energy footprint. Finally, we hope that efforts and estimates like these encourage more public information about cloud energy usage, and in particular help cloud providers find ways to determine and deliver data like this, either as broad coefficients for estimation or as actual energy usage metrics collected from their internal monitoring.