October 2012 Site Performance Report

It’s been about four months since our last performance report, and we wanted to provide an update on where things stand as we go into the holiday season and our busiest time of the year. Overall, the news is very good!

Server Side Performance

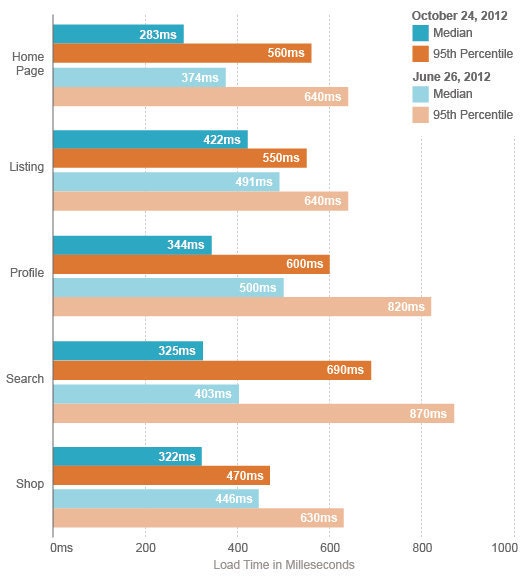

Here are the median and 95th percentile load times for core pages, measured on Wednesday, 10/24/12:

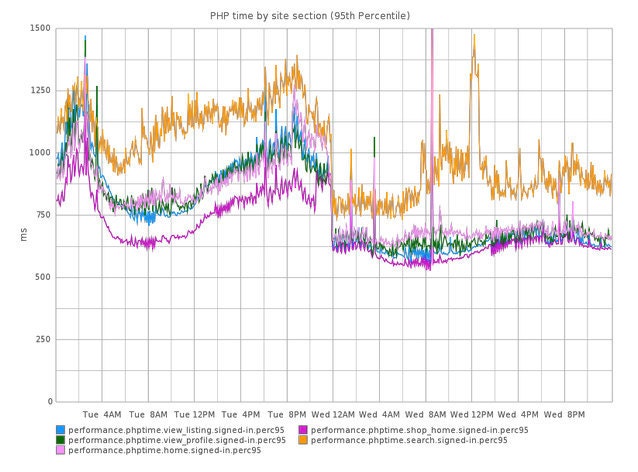

As you can see, load times declined significantly across all pages. Part of this improvement comes from Engineering's ongoing work to speed up our application code. Most of the dip, however, resulted from upgrading all of our webservers to new machines using Sandy Bridge processors. With Sandy Bridge we saw not only a significant drop in load time across the board, but also a dramatic increase in the amount of traffic that a given server can handle before performance degrades. You can clearly see when the cutover happened in the graph below:

This improvement is a great example of how operational changes can have a dramatic impact on performance. We tend to focus heavily on making software changes to reduce load time, but it is important to remember that sometimes vertically scaling your infrastructure and buying faster hardware is the quickest and most effective way to speed up your site. It’s a good reminder that when working on performance projects you should be willing to make changes in any layer of the stack.
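For readers who want to produce the same kind of summary from their own server-side timing logs, here is a minimal sketch of computing a median and 95th percentile in Python. The function name `summarize` and the sample values are hypothetical, not taken from our tooling or data, and the interpolation method is one of several reasonable choices.

```python
import statistics


def summarize(load_times_ms):
    """Return (median, 95th percentile) for a list of load times in ms."""
    if len(load_times_ms) < 2:
        raise ValueError("need at least two samples")
    median = statistics.median(load_times_ms)
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    # method="inclusive" treats the samples as the full population.
    p95 = statistics.quantiles(load_times_ms, n=100, method="inclusive")[94]
    return median, p95


# Hypothetical samples in milliseconds, not real measurements.
samples = [120, 135, 150, 160, 180, 200, 240, 310, 450, 900]
median_ms, p95_ms = summarize(samples)
print(f"median={median_ms} ms, p95={p95_ms} ms")
```

Reporting both numbers matters because a single slow outlier (the 900 ms sample here) barely moves the median but dominates the 95th percentile.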

Front-end Performance

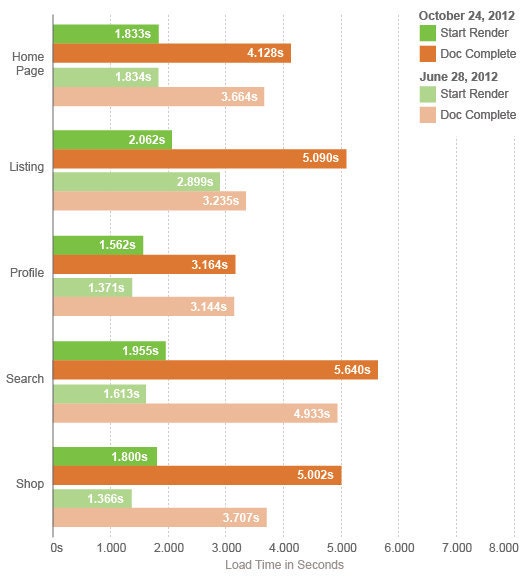

Since our last update we have adopted a more scientific way of measuring front-end performance, using a hosted version of WebPagetest. This enables us to run many synthetic tests a day, and slice the data however we want. Here are the latest numbers, gathered with IE8 from Virginia over a DSL connection as a signed-in Etsy user:

These are median numbers across all of the runs on 10/24/12, and we run tests every 30 minutes. Most of the pages are slower than in the last update, and we believe that this is because we now use our hosted version of WebPagetest and aggregate many tests instead of looking at single tests on the public instance. By design, our new method of measurement should be more stable over the long term, so our next update should give a more realistic view of trends over time.
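To illustrate why aggregating many runs is more stable than reading a single test, here is a small sketch that groups synthetic test results by page and takes the median of each group. The `(page, load_time)` record shape, the function name `median_by_page`, and all of the numbers are assumptions for illustration; this is not WebPagetest's export format or our actual pipeline.

```python
from collections import defaultdict
from statistics import median


def median_by_page(runs):
    """Group (page, load_time) pairs by page and return each page's median."""
    grouped = defaultdict(list)
    for page, load_time in runs:
        grouped[page].append(load_time)
    return {page: median(times) for page, times in grouped.items()}


# Hypothetical runs (page name, load time in seconds), not real measurements.
runs = [
    ("home", 2.1), ("home", 2.4), ("home", 9.8),  # 9.8 is a one-off slow run
    ("listing", 3.0), ("listing", 3.2), ("listing", 3.1),
]
medians = median_by_page(runs)
print(medians)
```

Had we happened to look only at the 9.8 second run, the home page would appear several times slower than it typically is. With a test every 30 minutes, a day yields on the order of 48 runs per page, which gives the median plenty of samples to work with.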

You might be surprised that we are using synthetic tests for this front-end report instead of Real User Monitoring (RUM) data. RUM is a big part of performance monitoring at Etsy, but when we are looking at trends in front-end performance over time, synthetic testing allows us to eliminate much of the network variability that is inherent in real user data. This helps us tie performance regressions to specific code changes and get a more stable view of performance overall. We believe that this approach highlights the elements of page load time that developers can influence, rather than things like CDN performance and last-mile connectivity, which are beyond our control.

New Baseline Performance Measurements

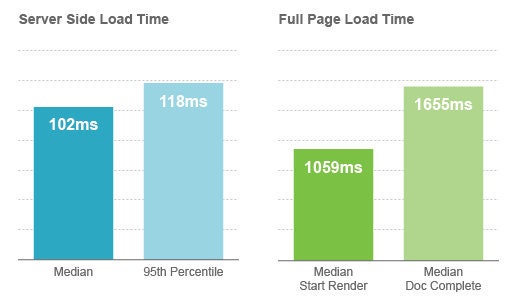

We also created an extremely basic page that allows us to track the lower limit on load time for Etsy.com. This page includes only our standard header and footer, with no additional code or assets. We generate some artificial load on this page and monitor its performance. This page represents the overhead of our application framework, which includes things like our application configuration (config flag system), translation architecture, security filtering and input sanitization, ORM, and our templating layer. Having visibility into these numbers is important, since improving them impacts every page on the site. Here is the current data on that page:

Over the next few months we hope to bring these numbers down while at the same time bringing the performance of the rest of the site closer to our baseline.
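One way to think about the gap between a page and the baseline is as a share: how much of a page's load time is framework overhead that every page pays. The sketch below shows that arithmetic; the function name `overhead_share` and every number in it are hypothetical placeholders, not the measurements from this report.

```python
def overhead_share(baseline_ms, page_ms):
    """Fraction of a page's load time accounted for by the baseline page."""
    if page_ms <= 0:
        raise ValueError("page_ms must be positive")
    return baseline_ms / page_ms


# Hypothetical median load times in milliseconds, not real measurements.
baseline_ms = 100
page_medians_ms = {"home": 250, "listing": 400}

shares = {
    page: overhead_share(baseline_ms, ms)
    for page, ms in page_medians_ms.items()
}
for page, share in shares.items():
    print(f"{page}: {share:.0%} of load time is baseline overhead")
```

A high share suggests that framework work would pay off most for that page, while a low share points at page-specific code as the better target.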