Performance tuning syslog-ng

You may have noticed a theme in many of our blog posts. While we do push the technology envelope in many ways, we also like to stick to tried, true and data driven methods to help us keep us sane.

One of the critical components of our infrastructure is centralised logging. Our systems generate a vast amount of logging, and we like to keep it all. Everything. The whole bag.

When faced with similar situations, other companies have embraced a lot of new technology:

- Flume

- Scribe

- Logstash

At Etsy, we've stayed old-school - practically prehistoric by technology standards. Our logging infrastructure still uses syslog as the preferred transport because it Just Works™.

...Mostly.

Syslog-ng is a powerful tool, and has worked well, as long as we've paid a little attention to performance tuning it. This a collection of our favourite syslog-ng tuning tips.

Our central syslog server, an 8-core server with 12Gb RAM, currently handles around 60,000 events per second at peak at ~25% CPU load.

Rule ordering

If, like us, you have a central syslog server with all of your hosts sending their vital information (whether it be system logs, Apache logs, Squid logs, or anything else) you will probably have a lot of filters set up to match certain hostnames, and certain log lines so you can get the logs into their correct places.

For example, you may be trying to find all Apache access log lines across all of your webservers, so you end up with all the logs in a single file destination (something like /log/web/access.log perhaps)

This feature is pretty widely used, and extremely powerful in the sorting of your logs but it’s also very power consuming in terms of CPU time. Regex matching especially has to check every log line event that comes in, and when you’re pushing tens of thousands of them, it can begin to hurt.

But, in the case of our Apache access log example, you would use the “flags(final)” attribute in order to tell syslog-ng to stop processing that line, so it doesn’t even have to check the other regexes. That is all well and good, but have you considered internally what order syslog-ng is checking those match statements?

For example, we use Chef pretty extensively at Etsy to automate a lot of things; syslog-ng is one of them. Each machine role has it's own syslog-ng definitions, and our Chef recipes automatically generate both the client and server config for use with that role. To do this, we template out the configuration, and drop the files into /etc/syslog-ng.d/, which is included from the main syslog-ng.conf.

Now, if your biggest traffic (log wise) happens to be for your webservers, and your config file ends up being called "web.conf", syslog-ng will quite happily parse all your configs and when it compiles it's rules, the config that checks to see if the line is from Apache will end up at the end of the list. You are potentially running tens if not hundreds of other match statements and regexes for no reason what so ever for the bulk of your traffic.

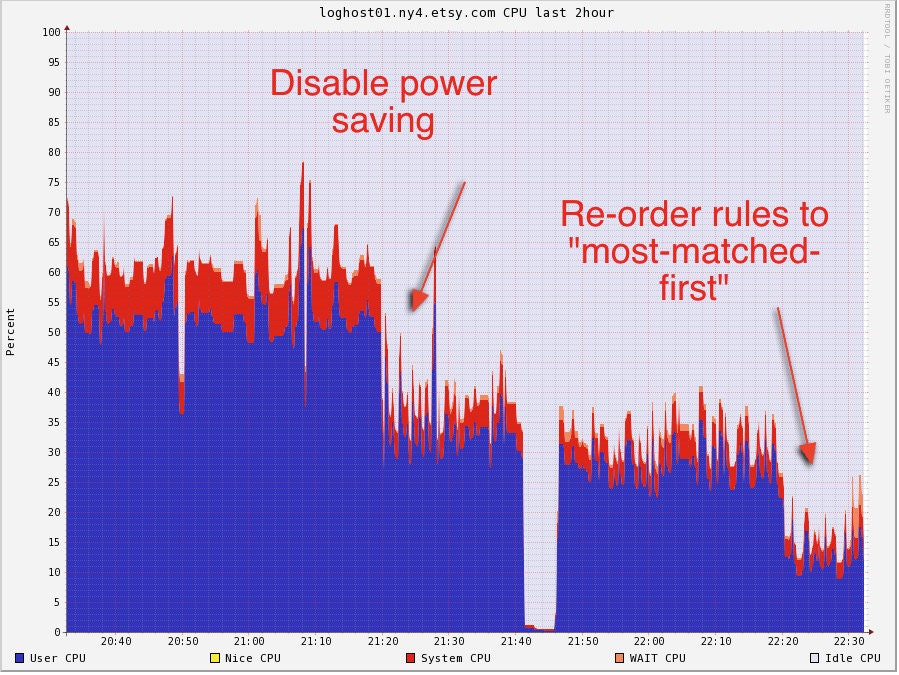

Luckily the fix is extremely simple: If using one config file, keep the most used rules at the top. If you use syslog-ng.d, keep your most used rules in a file that begins with a "0-" to force it to the top of the list. This tiny change alone halved the CPU we were using on our syslog-ng server.

tl;dr: Make sure syslog-ng is parsing the most frequently logged lines first, not last.

Log windows and buffer sizing

Math time! Syslog-ng has a great feature called "flow control" - when your servers and applications send more traffic than syslog-ng can handle, it will buffer the lines in memory until it can process them. The alternative would be to drop the log lines, resulting in data loss.

Four variables associated with flow control that we will look at, are:

log_fifo_size- The size of the output buffer. Each destination has its own one of these. If you set it globally, one buffer is created for each destination, of the size you specify.log_iw_size- The initial size of the input control window. Syslog-ng uses this as a control to see if the destination buffer has enough space before accepting more data. Applies once to each source (not per-connection!)log_fetch_limit- The number of messages to pull at a time from each source. Applies to every connection of the source individually.max_connections- The maximum number of TCP connections to accept. You do use TCP, don't you?!

Each of your servers shouldn't open more than one TCP connection to your central syslog server, so make sure you set max_connections to more than that number.

After that, it's time to break out your calculator and commit these equations to memory:

log_iw_size = max_connections * log_fetch_limit

log_fifo_size = log_iw_size * (10~20)There is some variance on how you calculate log_fifo_size which you will have to experiment with. The principle is this:

Syslog-ng will fetch at most

log_fetch_limit * max_connectionsmessages each time it polls the sources. Yourlog_fifo_sizeshould be able to hold many polls before it fills up. When your destination (file on disk? another syslog server?) is not able to accept messages quickly enough, they will accumulate in thelog_fifobuffer, so make this BIG.

log_fifo_size = (log_iw_size = max_connections log_fetch_limit) 20

Experiment with disabling power saving features

At Etsy, we take pride in trying to do our bit for the planet. It's what our business is built on, trying to encourage good behaviours around recycling, and re-using precious resources. It's why we announced earlier this year that we are now a member of the B-Corp movement so we can measure our success in giving back to the world in various ways. One of the criteria for this involves how much energy we use as an organisation; a tough subject when you have a datacenter which are well known for using huge amounts of power. Because of this, we pride ourselves in working with vendors that also care about their power footprint, and being able to squeeze the most savings without effecting performance.

However, this isn't to say that, in particular, power saving modes provided with servers are perfect. We have hundreds of servers that can scale back their CPUs and other devices, saving power with absolutely 0 measured effect to performance or any other metric (and, believe us, we tested). There are two recorded cases where this hasn't worked out well for us.

Briefly, the principle behind power saving in servers is to "scale back" (that is, to decrease the speed and thus power usage) of CPU performance when the server doesn't need it, and with many modern machines having multiple CPUs they can often be the biggest power draw, so the savings involved here are huge.

What if your server demands a lot of CPU power? Well that's no problem, the CPU can scale back up to full speed instantly, with basically no effect to the response time.

But what if your server does a lot of context switching, or has very bursty CPU usage? Two prime examples of this are Nagios, and syslog-ng. Nagios, for example, has to spin up processes to execute checks in a very spiky manner, and to add to this there are sometimes hundreds of them at once, so the cost of switching between doing tasks in all those processes (even if the time of each individual one on it's own is tiny) is huge (this is known as context switching). A similar thing happens with syslog-ng, wher the incoming log events are very bursty, so the CPU is actually spending more time scaling back and fourth than doing our processing.

In these two instances, we switched from power saving mode to static full power mode, and the amount of CPU consumed halved. More importantly, that CPU shows up as system time; time wasted context switching and waiting for other events is suddenly reduced dramatically, and all CPU cores can operate on whatever events are needed as soon as possible.

There are some great tools that allow you to watch your CPU scaling in action, for example i7z which is a great command line tool (and UI, if you fancy) that is easy to get running and gives you a great view into the inner workings of Intel CPUs.

The important point here is that we would've actually purchased a more power hungry machine to scale with our log traffic if we hadn't have found this, somewhat defeating the purpose of the power saving feature in the first place.

tl;dr: Experiment with power settings on your server, unlock their full potential, if it makes no difference then put it back.

Summary

In total these changes took about 5 days of research and testing, and 1/2 day of effort to implement. We were regularly hitting the limits of what this servers was capable of processing before making these changes and had considered buying much larger servers and moving to other methods of log aggregation.

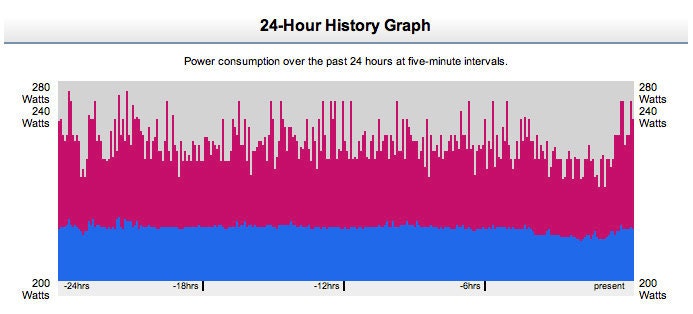

A small dose of profiling and planning has reduced the load on the server to 1/5th, reduced our power consumption and improved the efficiency of the whole system:

This post was co-written by Avleen Vig (@avleen) and Laurie Denness (@lozzd) battling to solve hard (and sometimes easy) problems to make operations better for everyone. Why not come and help us?